What If the Future of AI Isn’t LLMs at All?

For the past few years, Large Language Models (LLMs) have defined the AI revolution. From chatbots to copilots, from content generation to coding assistants—LLMs have become the default interface between humans and machines.

But what if this is just the beginning?

What if LLMs are not the final form of AI—but merely a stepping stone?

A growing number of researchers, including pioneers like Yann LeCun, believe the next breakthrough won’t come from bigger models or better prompts. Instead, it will come from something fundamentally different:

World Models.

The Limits of Today’s AI

LLMs are powerful—but they have clear limitations:

- They predict text, not reality

- They have no grounded model of the physical world; what they know about it comes from text

- They don’t keep memory across conversations unless external storage is added

- They struggle with long-term reasoning and planning

In simple terms, LLMs are incredibly good at sounding intelligent—but not always at being intelligent.

They operate on patterns, not experience.

And that’s a critical gap.

Enter “World Models”

World models aim to solve this.

Instead of predicting the next word, they aim to:

- Understand how the real world works

- Build internal simulations of environments

- Learn from continuous interaction

- Maintain memory over time

Think of it like this:

LLMs = Language prediction

World Models = Reality simulation

This is a shift from “talking AI” → “thinking AI”
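To make the idea concrete, here is a minimal sketch of the loop described above: interact with an environment, record what happened, then plan inside the learned model instead of the real world. Everything in it is a toy assumption for illustration (the five-cell corridor, the names `real_step`, `learn_model`, and `plan`); research world models learn from pixels or sensor data with neural networks, not lookup tables.

```python
import random
from collections import deque

# Hypothetical toy environment: a corridor of 5 cells with walls at both ends.
N_CELLS = 5
ACTIONS = (-1, +1)  # step left, step right


def real_step(state, action):
    """The 'real world': move one cell, clamped to the corridor bounds."""
    return max(0, min(N_CELLS - 1, state + action))


def learn_model(n_steps=200, seed=0):
    """Learn from interaction: wander randomly and record each outcome."""
    rng = random.Random(seed)
    model = {}  # (state, action) -> observed next state
    state = 0
    for _ in range(n_steps):
        action = rng.choice(ACTIONS)
        next_state = real_step(state, action)
        model[(state, action)] = next_state  # memory of what was observed
        state = next_state
    return model


def plan(model, start, goal):
    """Breadth-first search inside the learned model (no real-world calls)."""
    queue = deque([(start, [])])
    seen = {start}
    while queue:
        state, path = queue.popleft()
        if state == goal:
            return path
        for action in ACTIONS:
            nxt = model.get((state, action))
            if nxt is not None and nxt not in seen:
                seen.add(nxt)
                queue.append((nxt, path + [action]))
    return None  # the model has not seen enough to find a route


model = learn_model()
print(plan(model, start=0, goal=4))
```

The point of the sketch is the division of labor: `learn_model` is the only function that touches the environment, while `plan` reasons entirely inside the agent's own simulation. If exploration missed part of the corridor, `plan` returns `None` rather than guessing.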

Why This Matters

If AI can simulate and understand the world, everything changes.

1. Robotics Becomes Truly Intelligent

Robots won’t just follow instructions; they’ll build a working model of their surroundings, which should let them:

- Navigate unfamiliar spaces

- Adapt to unexpected situations

- Learn like humans do

2. AI Moves Beyond Screens

Today’s AI lives in chat windows.

World models enable AI to operate in:

- Manufacturing

- Healthcare

- Autonomous systems

- Wearables

3. Memory Becomes Native

Instead of resetting every conversation, AI can:

- Remember context over months or years

- Build personalized understanding

- Improve continuously

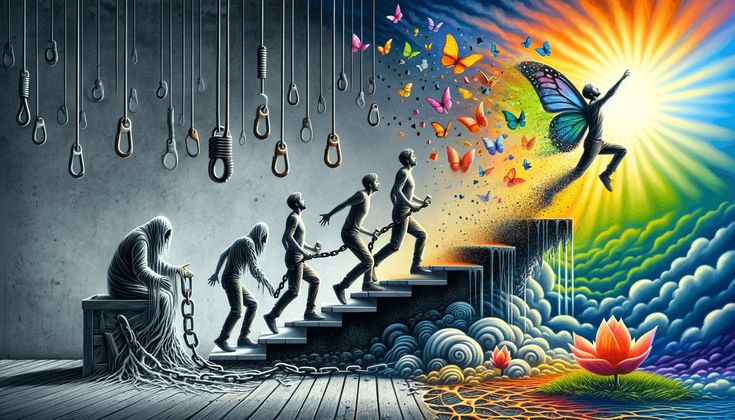

The End of the “Prompt Era”?

Right now, interacting with AI requires skill:

- Prompt engineering

- Iteration

- Refinement

But with world models, that could change:

- AI understands intent naturally

- Context is persistent

- Interaction becomes seamless

You don’t instruct AI.

You collaborate with it.

Why LLMs Alone May Not Be Enough

Critics of pure scaling argue that it is running into diminishing returns:

- More data ≠ deeper understanding

- Bigger models ≠ better reasoning

- Higher cost ≠ sustainable progress

At some point, simply making models larger stops delivering breakthroughs.

The next leap requires a new paradigm, not just more compute.

The Hybrid Future

This doesn’t mean LLMs disappear.

More likely, we’ll see:

- LLMs for communication

- World models for reasoning and action

Together, they form:

A system that can understand, communicate, and act
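One way to picture that split is a pipeline with a language layer on the outside and a world model in the middle. The sketch below is only an illustration under heavy assumptions: `parse_request` and `describe` are keyword-level stand-ins for where an LLM would sit, `simulate` is a stand-in for a learned world model, and all names are hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Goal:
    target: int  # corridor cell the user wants to reach


def parse_request(text: str) -> Goal:
    """Stand-in for the LLM: turn free text into a structured goal.
    A real system would call a language model; this just finds a number."""
    digits = [int(tok) for tok in text.split() if tok.isdigit()]
    if not digits:
        raise ValueError("no target cell found in request")
    return Goal(target=digits[0])


def simulate(position: int, action: int, n_cells: int = 5) -> int:
    """Stand-in for the world model: predict the next position."""
    return max(0, min(n_cells - 1, position + action))


def plan_actions(start: int, goal: Goal, max_steps: int = 20) -> list[int]:
    """Greedy planner that rolls the world model forward toward the goal."""
    position, actions = start, []
    while position != goal.target and len(actions) < max_steps:
        action = 1 if goal.target > position else -1
        predicted = simulate(position, action)
        if predicted == position:  # model predicts no movement, so stop
            break
        position = predicted
        actions.append(action)
    return actions


def describe(actions: list[int]) -> str:
    """Stand-in for the LLM again: report the plan in words."""
    if not actions:
        return "Already there, or no route found."
    return "Plan: " + ", ".join("right" if a > 0 else "left" for a in actions)


def handle(text: str, start: int = 0) -> str:
    """Language in, world-model planning in the middle, language out."""
    return describe(plan_actions(start, parse_request(text)))


print(handle("please move to cell 3"))  # prints "Plan: right, right, right"
```

The language layer never decides what to do, and the planner never sees raw text. Whether real hybrid systems end up drawing the boundary this cleanly is an open question.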

The Bigger Picture

We may be witnessing a transition similar to:

- From desktop computing → smartphones

- From on-premises software → cloud computing

LLMs were the breakthrough that made AI accessible.

But world models could be the breakthrough that makes AI truly intelligent.

Final Thought

If LLMs taught machines how to talk,

world models may teach them how to understand.

And the moment AI begins to understand the world, not just describe it,

that’s when everything changes.
